Gadget Glory: Fastest Firsts and Biggest Bytes

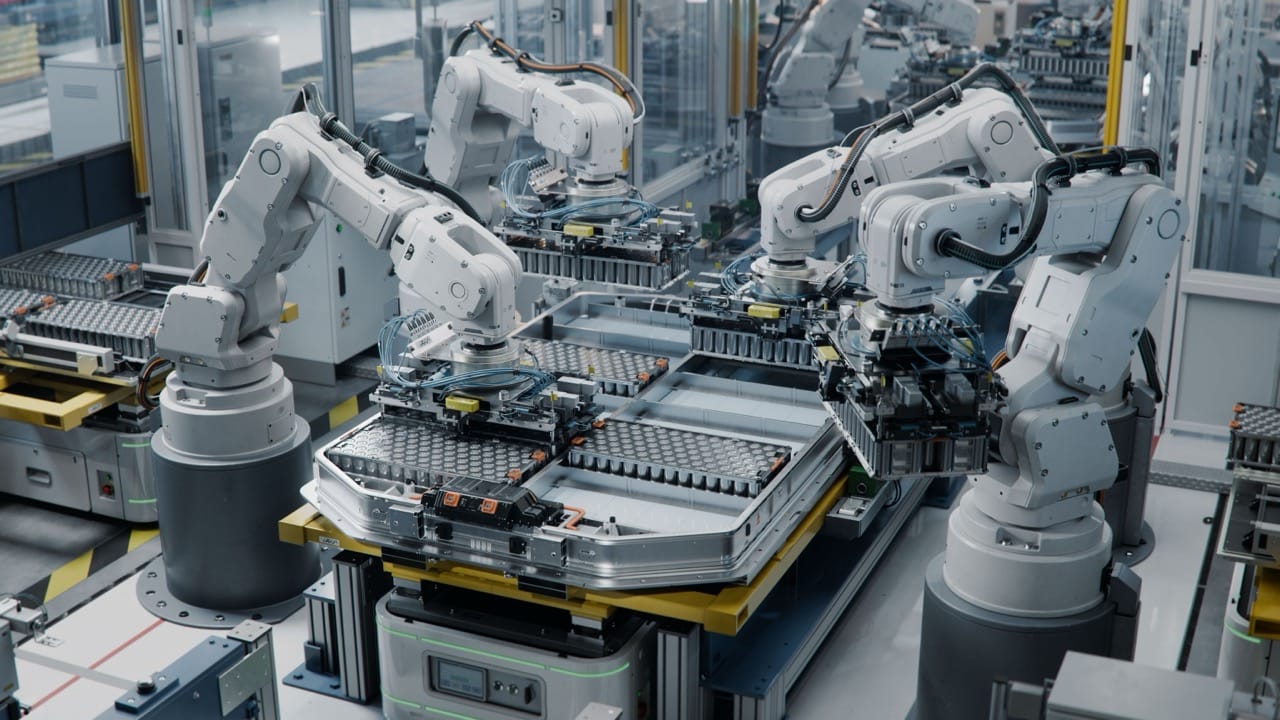

Technology history is packed with moments when someone did something for the very first time, after which the rest of the world spent decades making it smaller, faster, cheaper, and more widespread. Early electronic computers were not sleek gadgets but room-sized machines built from thousands of vacuum tubes, consuming huge amounts of power and demanding constant maintenance. Yet those bulky pioneers proved an idea that still drives modern life: information can be processed automatically at speeds no human can match. From there, the race for extremes became a recurring theme, from the first programmable machines to the first computers sold for personal use.

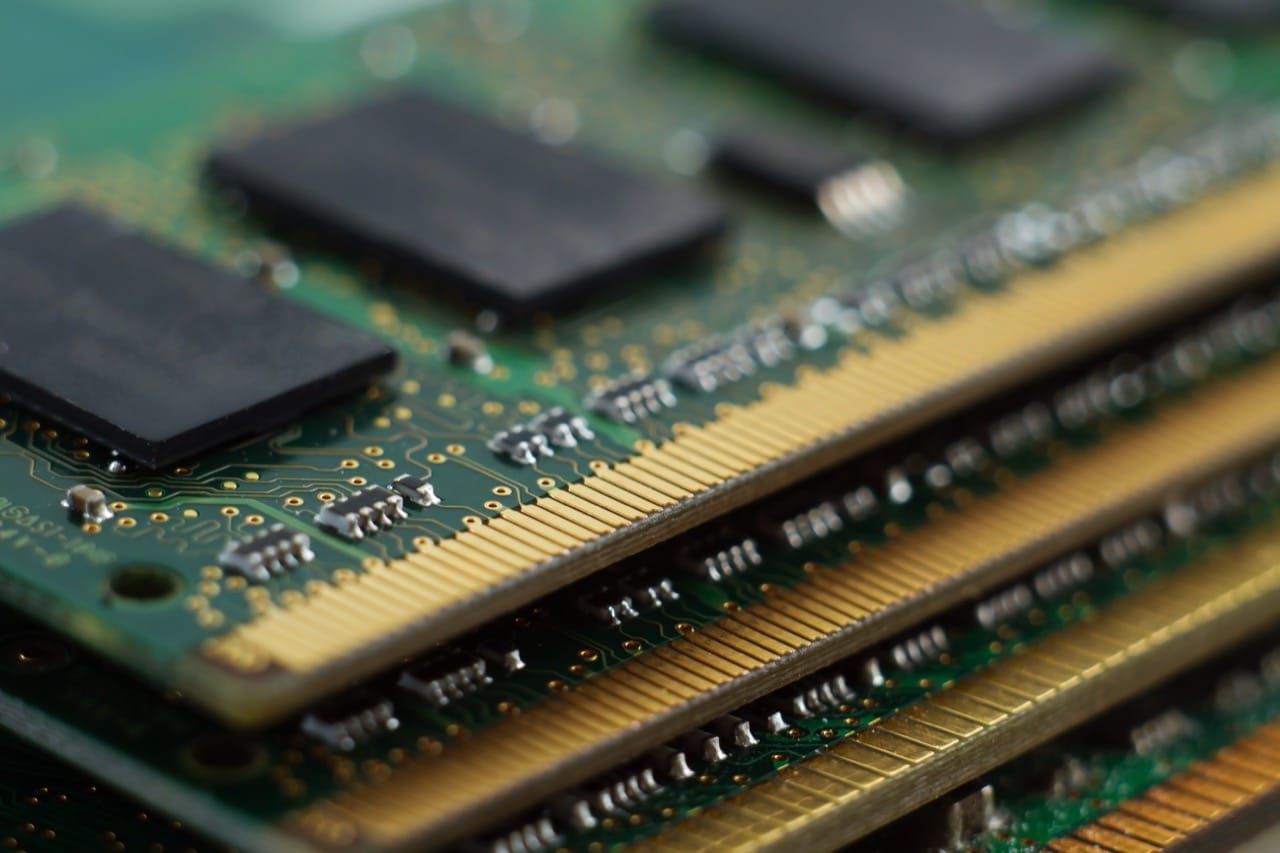

One of the most dramatic leaps came from the transistor and later the integrated circuit, which replaced fragile vacuum tubes with tiny solid-state switches. This shift made computers more reliable and opened the door to miniaturization. The famous observation known as Moore’s law captured the spirit of the era: the number of transistors on a chip doubled roughly every two years, making each new generation more capable. Today it is normal for a consumer device to contain chips with billions of transistors, a number so large it is hard to visualize. If each transistor were a light switch, a single phone would hold far more switches than an entire city.
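To make the compounding concrete, here is a minimal Python sketch. It assumes the commonly quoted two-year doubling period holds steadily, although real chips only followed it approximately. The function name `projected_transistors` and the starting count of 2,000 transistors are hypothetical and chosen only for illustration.

```python
def projected_transistors(start_count: float, years: float,
                          doubling_period_years: float = 2.0) -> float:
    """Project a transistor count assuming steady exponential doubling.

    The two-year doubling period is an assumption (the popular form of
    Moore's law), not a physical constant.
    """
    return start_count * 2 ** (years / doubling_period_years)


# Hypothetical example: how much does a count grow in 40 years?
growth_factor = projected_transistors(1, 40)
print(f"Growth factor after 40 years: {growth_factor:,.0f}")
print(f"2,000 transistors become: {projected_transistors(2_000, 40):,.0f}")
```

Forty years at a two-year doubling period is twenty doublings. That is a factor of 2^20, just over a million, which is how a few thousand switches can grow into billions.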

Speed records have their own mythology. Early supercomputers were celebrated for performing millions of operations per second. Today the fastest machines are measured in petaflops and exaflops, meaning quadrillions and quintillions of floating-point operations per second, respectively. These numbers matter because they enable tasks like climate simulation, drug discovery, and training large AI models. What is striking is how often yesterday’s supercomputer performance becomes tomorrow’s everyday capability. A modern laptop can outperform machines that once represented national prestige.
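The prefixes hide enormous ratios: mega- is 10^6, giga- 10^9, tera- 10^12, peta- 10^15, and exa- 10^18. The minimal Python sketch below shows how long one job would take at each scale. It assumes a hypothetical workload of 10^18 floating-point operations and a machine that sustains its full rate, ignoring real-world overheads such as memory bandwidth. The helper names `seconds_needed` and `describe` are illustrative.

```python
# SI prefixes as applied to FLOPS (floating-point operations per second).
PREFIXES = {
    "mega": 10**6,
    "giga": 10**9,
    "tera": 10**12,
    "peta": 10**15,
    "exa": 10**18,
}

SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # ignores leap years


def seconds_needed(total_ops: int, flops: int) -> float:
    """Ideal run time, assuming the machine sustains its full rate."""
    return total_ops / flops


def describe(seconds: float) -> str:
    """Express a duration in the largest unit that fits."""
    units = (("years", SECONDS_PER_YEAR), ("days", 86_400),
             ("hours", 3_600), ("minutes", 60))
    for name, size in units:
        if seconds >= size:
            return f"{seconds / size:,.1f} {name}"
    return f"{seconds:,.1f} seconds"


WORKLOAD = 10**18  # hypothetical job: one quintillion operations

for prefix, rate in PREFIXES.items():
    print(f"1 {prefix}FLOPS: {describe(seconds_needed(WORKLOAD, rate))}")
```

Each step up the prefix ladder is a factor of 1,000. A job that would tie up a one-megaFLOPS machine for more than thirty thousand years finishes in one second at exascale.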

Storage has followed a similar pattern of “biggest bytes.” The first hard drives stored only a few megabytes and could be the size of a refrigerator. Now tiny flash chips in phones and cameras hold hundreds of gigabytes, and consumer solid-state drives offer multiple terabytes. At the data-center scale, cloud storage turns the idea of a single “big drive” into an ocean of distributed disks, with redundancy and error correction quietly keeping your photos and messages safe even when hardware fails.
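XOR parity, the idea behind RAID 5, is one simple way redundancy can survive a failed drive. The Python sketch below illustrates that technique under simplified assumptions. It does not describe how any particular cloud service works; large systems typically rely on replication or more elaborate erasure codes. The function `xor_blocks` and the sample data are hypothetical.

```python
from functools import reduce


def xor_blocks(blocks: list[bytes]) -> bytes:
    """XOR equal-length byte blocks together, position by position."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# Hypothetical data striped across three "disks".
disks = [b"PHOTO", b"VIDEO", b"NOTES"]
parity = xor_blocks(disks)  # stored on a fourth disk

# Simulate losing disk 1, then rebuild it from the survivors plus parity.
lost_index = 1
survivors = [block for i, block in enumerate(disks) if i != lost_index]
rebuilt = xor_blocks(survivors + [parity])
print(rebuilt)  # b'VIDEO'
```

XOR is its own inverse, so combining the surviving blocks with the parity block cancels everything except the missing data. A single parity block protects against only one lost disk at a time. Tolerating more simultaneous failures requires additional redundancy.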

Communication milestones are equally extreme. The first long-distance electronic messages traveled over telegraph lines; then telephones carried voices, radio carried broadcasts, and satellites helped connect continents. The internet added a new twist: it is not one network but a system of networks that agree on shared protocols, most notably TCP/IP. Those protocols allow a video call to cross oceans in fractions of a second, even if the path hops through many routers. Bandwidth records keep climbing, but so does the cultural impact of connectivity, from viral memes to global collaboration.
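Rough physics can check the “fractions of a second” claim, because light in optical fiber travels at roughly two-thirds of its vacuum speed. The Python sketch below assumes a refractive index of 1.47, a typical figure for silica fiber, and a hypothetical 6,000 km cable route. It ignores router processing and queuing delays, which add to the real total.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact vacuum value, in km/s
FIBER_REFRACTIVE_INDEX = 1.47  # assumption: typical for silica fiber


def one_way_delay_ms(distance_km: float) -> float:
    """Propagation delay only; router and queuing delays are not modeled."""
    speed_in_fiber_km_s = SPEED_OF_LIGHT_KM_S / FIBER_REFRACTIVE_INDEX
    return distance_km / speed_in_fiber_km_s * 1000


route_km = 6_000  # hypothetical ocean-crossing cable length
one_way = one_way_delay_ms(route_km)
print(f"One way: {one_way:.1f} ms, round trip: {2 * one_way:.1f} ms")
```

For the assumed route, this gives roughly 29 ms one way, or under 60 ms for a round trip. That is well within a fraction of a second, even before counting the extra time spent in routers.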

Programming languages and software culture have their own superlatives. Some languages become milestones not because they run fastest but because they are the most widely used, shaping how people think about problems. Popularity can shift as new platforms appear, from the rise of web development to the explosion of data science. Meanwhile, open-source projects show a different kind of scale: thousands of contributors, millions of users, and codebases that evolve like living ecosystems.

What makes tech trivia fun is that record-breaking numbers are rarely just bragging rights. Firsts create new possibilities, fastest machines reveal what is computationally feasible, and biggest storage and networks change what society expects to be instant and available. Behind every extreme is a chain of practical engineering choices, clever compromises, and occasional weird experiments that somehow worked. If you think like an engineer, the real thrill is noticing how quickly the impossible becomes ordinary.